オープンソースの音声認識モデルのWhisperを使うと、手軽に高品質な音声認識(文字起こし)が可能となる。今回は、Whisperを利用して簡単に使えるリアルタイム音声認識ツールを作ってみよう。

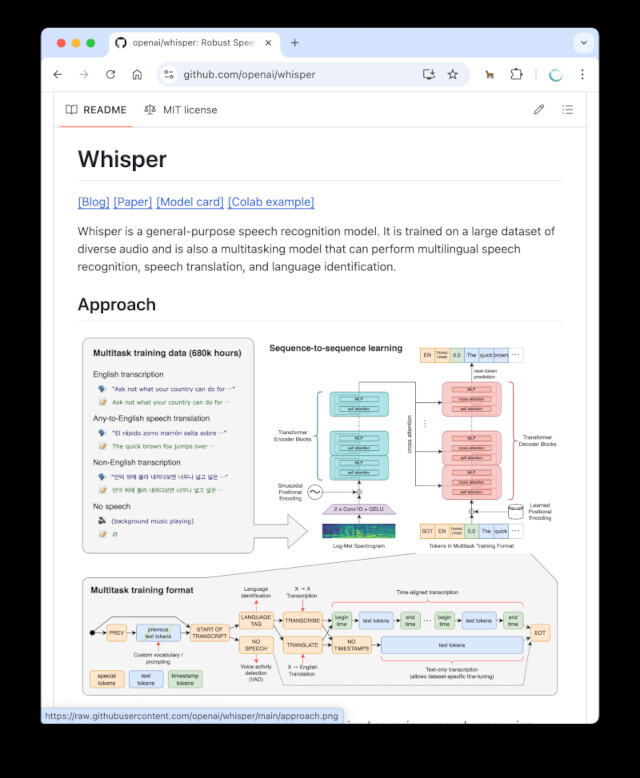

音声認識モデルのWhisperとは

「Whisper」は、ChatGPTで有名なOpenAIが公開しているオープンソースの音声認識モデルだ。高精度な音声認識モデルで、英語だけでなく日本語を含めた多言語の音声をテキストに変換できる。ノイズの多い環境でも高い認識精度を誇り、議事録作成や字幕生成、自動文字起こしなどに活用されている。

Pythonから簡単に扱える点も魅力で、柔軟な応用が可能となっている。そこで、今回は、Pythonでリアルタイムの音声認識ツールを作ってみよう。

音声認識に使うライブラリをインストールしよう

ターミナル(WindowsならPowerShell、macOSならターミナル.app)を起動して、次のコマンドを実行しよう。ここでは、Pythonの仮想環境venvを利用してトラブルを避けつつ、環境を構築する。

# (1) venvでPython仮想環境を作成する

python -m venv venv

# (2) venvを有効にしよう - Windowsの場合

Set-ExecutionPolicy RemoteSigned -Scope CurrentUser # ローカルでPSを有効に

.\venv\Scripts\Activate.ps1 # 環境を有効に

# (2) venvを有効にしよう - macOS/Linuxの場合

source venv/bin/activate

brew install libsndfile # ライブラリをインストール

# (3) ライブラリのインストール

pip install transformers torchaudio sounddevice soundfile

なお、筆者はPythonのバージョンは3.12.10で確認した。もし、うまくプログラムが動かない場合、Pythonのバージョンを切り替えて実行すると良いだろう。

この手のライブラリは、Pythonのバージョンを最新のものに更新すると、動かなくなる場合が多い。原稿執筆時点で、最新のPythonの安定版は、3.13.5だが、AI関連のライブラリをインストールして利用する場合は、そこから0.1か0.2を引いたバージョン(3.12.xか3.11.x)を利用すると良い。

加えて、Windowsでは、FFmpegのインストールが必須となっている。こちら( https://github.com/BtbN/FFmpeg-Builds/releases )から、Windows用のバイナリをダウンロードして、環境変数PATHにbinフォルダを追加する必要がある。以下の手順で作業しよう。

1. 上記より「ffmpeg-master-latest-win64-gpl-shared.zip」を選んでダウンロードしよう。ZIPファイルを解凍して、アーカイブの内容を、例えば、c:¥ffmpeg以下にコピーしよう。

2. Windowsメニューをクリックして「環境変数」を検索して、環境変数の編集パネルを表示する。

3 . 「環境変数」の編集にて、Pathの項目をダブルクリックして、そこに「c:¥ffmpeg¥bin」と追記して「OK」ボタンを押す

一番簡単なプログラムを試してみよう

さて、無事にライブラリがインストールできたら、次に、プログラムを作っていこう。リアルタイムの音声認識ツールを作る上で、必要となるのが、録音することと、音声認識を行うことだ。

最初にPCのマイクの音を拾って、録音するプログラムを作ってみよう。以下のプログラムは、プログラムを実行して、5秒間録音して「output.wav」に保存するプログラムだ。

"""PCのマイクを使って録音してWAVファイルに保存するプログラム"""

import sounddevice as sd

import numpy as np

import torchaudio

import torch

# 録音設定 --- (*1)

SAMPLE_RATE = 16000 # サンプリングレート

DURATION = 5 # 録音時間(秒)

OUTPUT_FILE = "output.wav" # 保存するWAVファイル名

def record_audio(): # --- (*2)

"""マイクから音声を録音してWAVファイルに保存する"""

print("録音開始...")

audio = sd.rec(int(DURATION * SAMPLE_RATE), samplerate=SAMPLE_RATE, channels=1, dtype='float32')

sd.wait() # 録音終了を待つ --- (*3)

print("録音終了。保存中...")

# Numpyの配列からテンソルに変換してtorchaudioで保存 --- (*4)

audio_tensor = torch.from_numpy(audio.T)

torchaudio.save(OUTPUT_FILE, audio_tensor, SAMPLE_RATE)

print(f"WAVファイルとして保存しました: {OUTPUT_FILE}")

if __name__ == "__main__":

record_audio()

プログラムを実行するには、上記のプログラムを「rec.py」という名前で保存して、ターミナルから次のコマンドを実行しよう。すると、録音が始まり、5秒後に「output.wav」を出力する。

python rec.py

プログラムを確認してみよう。(*1)では、録音に関する設定を記述する。音声認識モデルのWhisperは、サンプリングレートが16,000Hzの音声データを対象にするので、この値は変更しないようにしよう。

(*2)では、マイクからの音声を録音し、(*3)のwaitメソッドで録音終了を待機するようにする。

録音が完了した後、(*4)ではNumpy配列をPyTorchのテンソルに変換してファイルに保存する。

WAVファイルを音声認識するプログラム

次に、WAVファイル「output.wav」を読み込んで音声認識を行って、テキストとして出力するプログラムを確認しよう。

import torch

from transformers import pipeline

# 使用するWhisperモデルと設定 --- (*1)

MODEL = "openai/whisper-small" # 必要に応じて変更

LANGUAGE = "japanese" # 日本語を利用する

WAV_FILE = "output.wav" # 音声ファイル名

# デバイス判定(GPU対応) --- (*2)

DEVICE = "cpu"

if torch.cuda.is_available():

DEVICE = "cuda"

elif torch.backends.mps.is_available():

DEVICE = "mps"

print("使用デバイス:", DEVICE)

# パイプラインの初期化 --- (*3)

pipe = pipeline(

"automatic-speech-recognition",

model=MODEL,

device=DEVICE)

# 音声認識の実行 --- (*4)

result = pipe(

WAV_FILE,

generate_kwargs={

"language": LANGUAGE,

"task": "transcribe"

})

# 結果の表示 --- (*5)

print("認識結果:")

print(result["text"])

プログラムを実行するには、上記のプログラムを「wav2text.py」という名前で保存して、下記のコマンドを実行しよう。すると、先ほど録音した音声「output.wav」を音声認識して、テキストを出力する。

python wav2text.py

プログラムのポイントを確認しよう。今回のプログラムは、AIで人気のパッケージtransformersを利用して、音声認識を行うものとなっている。

(*1)では、Whisperの音声認識に使うモデルや言語を指定する。

(*2)では、NVIDIAのCUDAが使える場合に、GPUを使うようにデバイス判定をしている。なお、「mps」というのは、macOSでAppleシリコンを使っている場合で、これを指定するとmacOSでの推論能力が大幅に向上する。

(*3)は、Whisperの音声認識モデルを読み込んで、パイプラインを初期化する。ここでは、(*1)で指定した、あまり能力の高くない"openai/whisper-small"というモデルを利用して音声認識を行う準備を行っている。もし、PCの能力が高い場合には、変数MODELを「openai/whisper-large-v3」などと変更すると、認識精度が大幅に向上する。

(*4)では、音声認識を実行して(*5)で結果を画面に出力する。

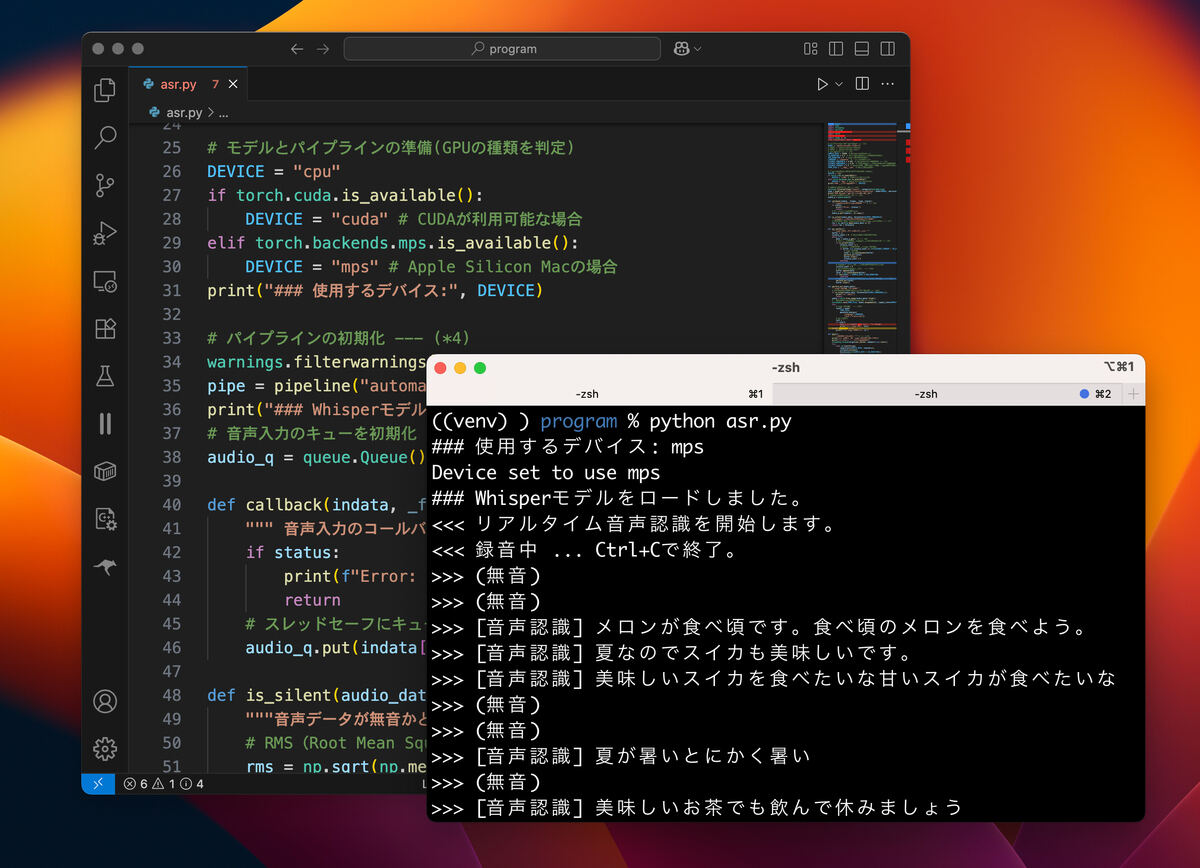

リアルタイム音声認識のツールのプログラム

上記のプログラムが、正しく動くことが確認できたら、リアルタイムの音声認識ツールを作ってみよう。なお、今回作ったプログラムは123行ある。リアルタイムの音声認識ツールのプログラムとしては、とても短いものの、コラムで紹介するには、少し長い。

そこで、プログラム全体をこちらのGist( https://gist.github.com/kujirahand/3ec6b35ba27f58b1ac596cf8a2db9447 )にアップロードした。完全版はそちらを参照してほしい。それでは、プログラムを少しずつ抜粋して、解説していこう。

# 利用する音声認識モデルを指定 --- (*1)

MODEL = "openai/whisper-large-v3"

# MODEL = "openai/whisper-medium"

# MODEL = "openai/whisper-small"

なお、以下の部分は、オーディオや音声認識の設定を指定している。今回は、リアルタイムの音声認識ツールということで、無音部分をスキップする仕組みにした。そのため、SILENCE_からはじまる設定を変更することで無音判定の精度を向上させることができる。特に、(*3)のSILENCE_THRESHOLDの値を小さくすると、判定が緩くなり小さな音でも、音声として判定するようになる。

# オーディオ設定 --- (*2)

SAMPLE_RATE = 16000 # Whisperは16kHzに対応

CB_DURATION = 0.2 # コールバックのブロックサイズ(秒)

ASR_DURATION = 5 # 音声認識の時間(秒)

LANGUAGE = "japanese" # 言語設定(日本語)

SILENCE_THRESHOLD = 0.003 # 無音と判定するしきい値 --- (*3)

SILENCE_THRESHOLD_L = 0.01 # 長い音声データを無音と判定するしきい値

SILENCE_TIMEOUT = 1.0 # 無音が続いた時の音声認識実行までの時間(秒)

以下の(*4)の部分は、音声認識モデルを読み込んでパイプラインを初期化している。

# パイプラインの初期化 --- (*4)

# …省略…

pipe = pipeline("automatic-speech-recognition", model=MODEL, device=DEVICE)

print("### Whisperモデルをロードしました。")

以下の(*5)では、音声データを管理するキュー構造の変数を初期化する。今回、音声の録音と音声認識をリアルタイムに行うため、それぞれの処理を別々のスレッドで実行する。その際、処理が干渉しないように、スレッドセーフなキュー構造を使って、スレッド間で安全にデータをやり取りできるようにする。

# 音声入力のキューを初期化 --- (*5)

audio_q = queue.Queue()

それで、実際に音声を録音して、キューにデータを追加するのが、以下の部分だ。(*5)の部分で、sd.InputStreamメソッドを実行すると、録音がはじまり、一定の音声データを得ると、引数callbackに指定した(*6)の関数を呼び出す仕組みとなっている。そして、(*6)の関数が呼び出されるタイミングで、キュー構造の変数audio_qにデータを追加している。

def callback(indata, _frames, _time, status):

""" 音声入力のコールバック関数 """ # --- (*6)

# …省略…

audio_q.put(indata[:, 0].copy())

…

def main():

""" マイクから録音開始 """ # --- (*15)

# …音声入力…

try:

with sd.InputStream(

samplerate=SAMPLE_RATE, channels=1,

callback=callback,

blocksize=int(SAMPLE_RATE * CB_DURATION)):

while True:

time.sleep(1)

except KeyboardInterrupt:

print("<<< 終了しました。")

なお、上記では処理を分かりやすく見せるため、省略したのだが、main関数実行時に、音声認識のスレッドを実行している。asr_workerというのが、音声データaudio_qを受け取って、無音でなければ、音声認識を実行する処理を実行する関数だ。

threading.Thread(target=asr_worker, daemon=True).start()

そして、実際の関数asr_workerの定義は次のようなものとなっている。(*8)でキューから音声データを取り出し、(*9)でそれが無音かチェックする。一定期間、無音が続いたら強制的に音声認識を行うが、そうでない場合、(*10)で逐次音声バッファにデータを追加していって、バッファが一杯になった時点(*11)で、音声認識を実行する。

def asr_worker():

""" 音声認識を行うワーカースレッド """

buffer = []

silence_count = 0 # 無音の連続回数をカウント

while True:

data = audio_q.get() # --- (*8)

# 無音検出:取得した音声データが無音かチェック --- (*9)

if is_silent(data):

silence_count += 1

# 無音が一定時間続いたら音声認識を実行

if buffer and silence_count >= int(SILENCE_TIMEOUT / CB_DURATION):

# 音声認識を実行

total = np.concatenate(buffer)

perform_asr(total)

buffer.clear()

silence_count = 0

continue

# 音声データが検出されたら無音カウントをリセット

silence_count = 0

# バッファに音声データを追加 --- (*10)

buffer.append(data)

total = np.concatenate(buffer)

if len(total) < SAMPLE_RATE * ASR_DURATION:

continue

# 音声データが十分な長さになったら音声認識を実行 --- (*11)

perform_asr(total)

buffer.clear()

なお、音声データが無音かどうかを判定するのに、次のような関数is_silentを定義した。ここでは、(*7)にあるように、RMS(Root Mean Square)という手法で無音かどうかを簡易チェックしている。

def is_silent(audio_data, threshold=SILENCE_THRESHOLD):

"""音声データが無音かどうかを判定する関数"""

# RMS(Root Mean Square)を計算して音声レベルを判定 --- (*7)

rms = np.sqrt(np.mean(audio_data ** 2))

return rms < threshold

音声データを受け取って音声認識を行うのが、以下の関数perform_asrだ。(*12)で無音かどうかを再度チェックして、無音なら何もしないで関数を抜ける。(*13)では、音声データを一度テンポラリファイルに保存して、(*14)で音声認識を実行する。

def perform_asr(audio_data):

"""音声認識を実行する関数"""

# 無音かチェックして無音なら何もしない --- (*12)

if is_silent(audio_data, threshold=SILENCE_THRESHOLD_L):

print(">>> (無音)")

return

audio = torch.from_numpy(audio_data).float()

# 一時ファイルに保存して音声認識 --- (*13)

torchaudio.save(TEMP_FILE, audio.unsqueeze(0), sample_rate=SAMPLE_RATE, format="wav")

try:

# 音声認識を実行 --- (*14)

result = pipe(

TEMP_FILE,

generate_kwargs={

"language":LANGUAGE,

"task":"transcribe"})

# 結果を表示

text = ""

if result:

text = str(result.get("text", "")).strip()

print(">>> [音声認識]", text)

except Exception as e:

print(f">>> 音声認識エラー: {e}")

プログラムを実行するには、こちら( https://gist.github.com/kujirahand/3ec6b35ba27f58b1ac596cf8a2db9447 )のプログラムを「asr.py」という名前で保存して、次のコマンドを実行する。

python asr.py

なお、冒頭で紹介した手順に従って、venvの仮想環境上でライブラリをインストールしてから実行しよう。

プログラムを実行すると、録音が開始され、リアルタイムに音声認識が行われて結果が画面に表示される。

まとめ

以上、今回は、リアルタイムの音声認識ツールを作ってみた。わずか123行のプログラムで、ツールを完成させることができた。ここからも、PythonのAI関連のツールは、かなり充実していることが分かる。

とは言え、ある程度、PCのスペックが良くないとリアルタイムで動いているという感じにはならないかもしれない。しかし、Whisperを提供しているOpenAIは、低スペックのマシンでもWhisperの音声認識ができるように、有料のAPIを提供しているので、音声認識部分だけは、有料のOpenAIのAPIを呼び出すように修正することもできる。

リアルタイムの音声認識は、いろいろな用途で利用できるので、本稿を参考に、改良して本格的なものを作ってみると良いだろう。

自由型プログラマー。くじらはんどにて、プログラミングの楽しさを伝える活動をしている。代表作に、日本語プログラミング言語「なでしこ」 、テキスト音楽「サクラ」など。2001年オンラインソフト大賞入賞、2004年度未踏ユース スーパークリエータ認定、2010年 OSS貢献者章受賞。これまで50冊以上の技術書を執筆した。直近では、「大規模言語モデルを使いこなすためのプロンプトエンジニアリングの教科書(マイナビ出版)」「Pythonでつくるデスクトップアプリ(ソシム)」「実践力を身につける Pythonの教科書 第2版」「シゴトがはかどる Python自動処理の教科書(マイナビ出版)」など。